The Equivalence of Weighted Kappa and the Intraclass Correlation Coefficient as Measures of Reliability - Joseph L. Fleiss, Jacob Cohen, 1973

Systematic literature reviews in software engineering—enhancement of the study selection process using Cohen's Kappa statistic - ScienceDirect

GitHub - thomaspingel/cohens-kappa-matlab: This is a simple implementation of Cohen's Kappa statistic, which measures agreement for two judges for values on a nominal scale. See the Wikipedia entry for a quick overview,

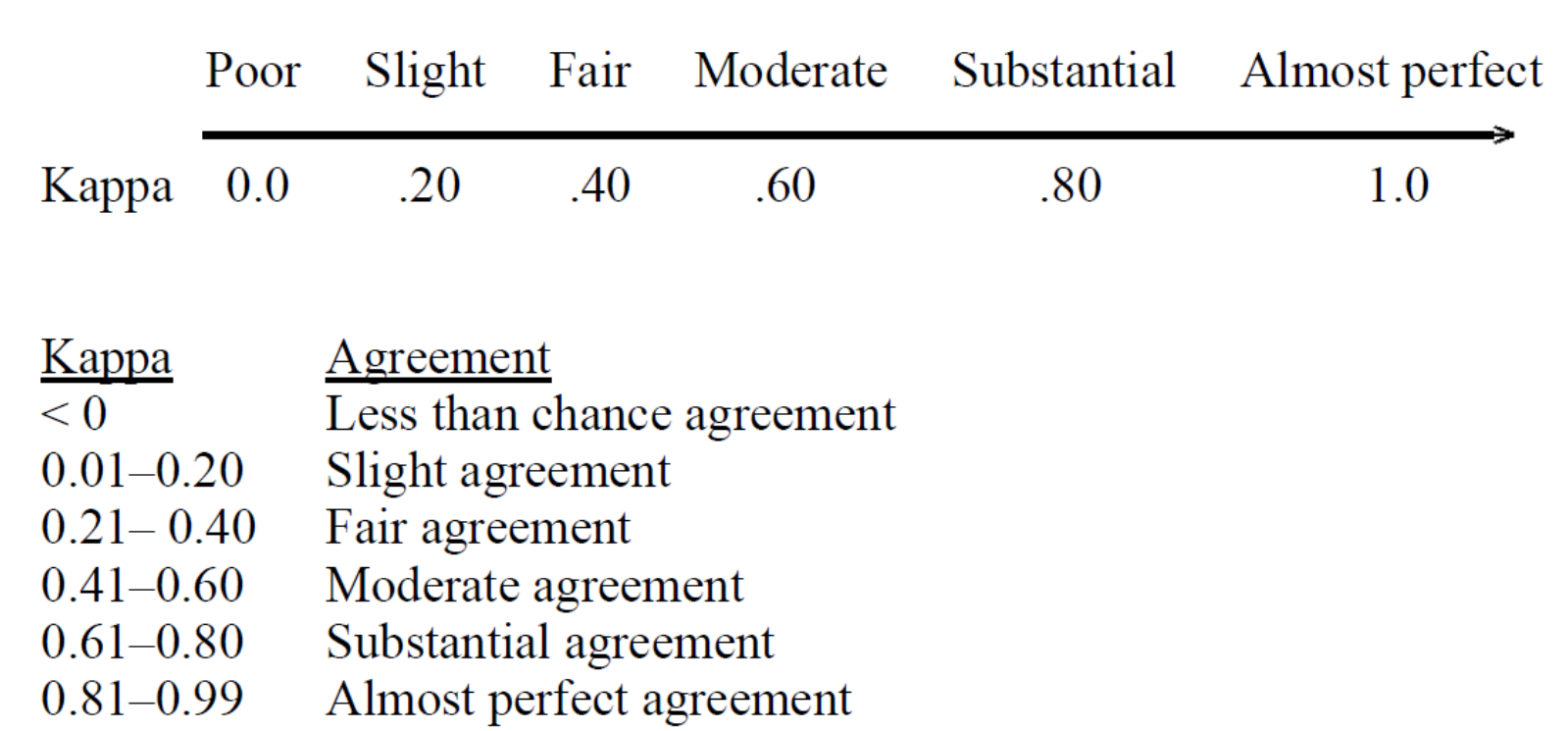

Agree or Disagree? A Demonstration of An Alternative Statistic to Cohen's Kappa for Measuring the Extent and Reliability of Ag

Interrater agreement statistics with skewed data: evaluation of alternatives to Cohen's kappa. | Semantic Scholar